Salesloft is a leading sales engagement platform used by revenue teams to execute prospecting and outreach at scale.

Through ongoing conversations and research with sales reps, my PM and I identified a constraint hiding in plain sight: reps couldn't work their full territory. Not because of pipeline gaps, but because manual prospecting effort made it physically impossible. Reps typically managed anywhere from 500 to 10,000 accounts but actively worked fewer than 100 at any given time. We brought this to leadership as a case for AI-powered prospecting workflows.

This wasn't a small ask. A new agentic offering meant real engineering investment, a repositioning bet, and long-term roadmap commitment. The core challenge wasn't speed for its own sake. It was how to reduce enough uncertainty to make that bet confidently, before a line of code was written.

I served as Product Design Lead, driving early-stage discovery for this initiative: setting direction, owning the research, and translating ambiguous agentic concepts into something testable.

Defined the problem space, success criteria, and key unknowns with Product before committing engineering resources.

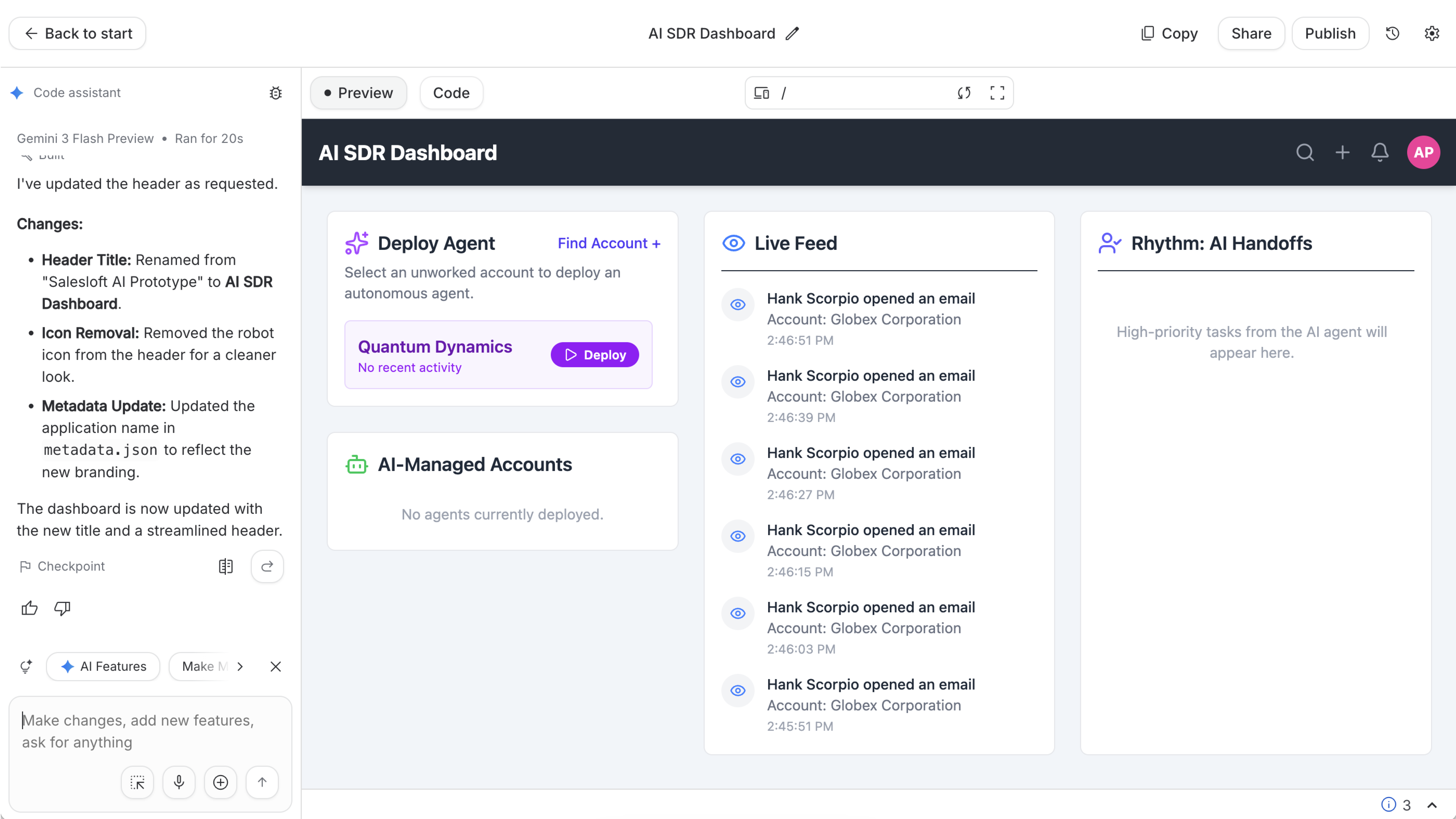

Built the prototype and led interaction design to make an abstract agentic concept testable in days, not weeks.

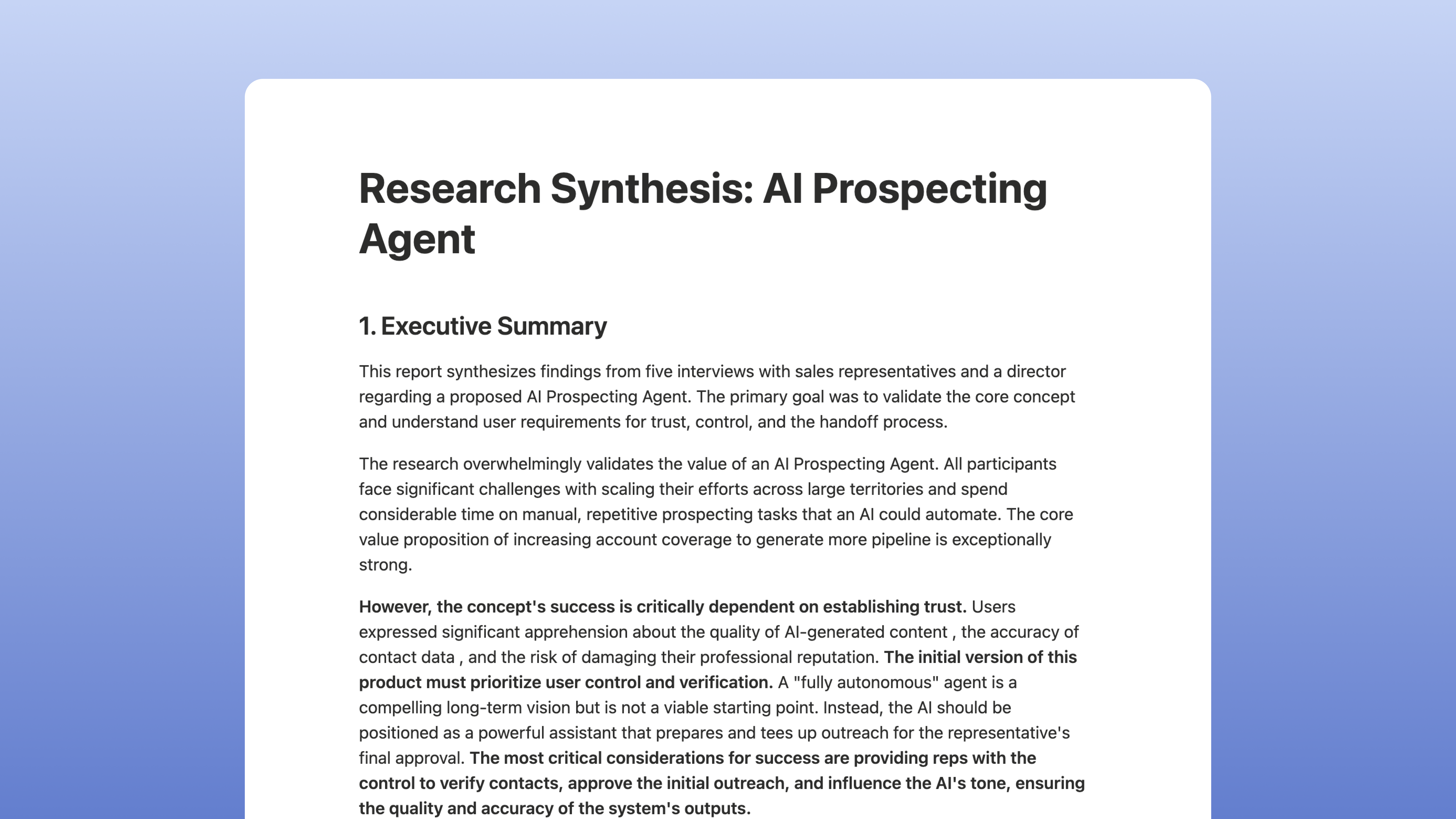

Designed and owned the research plan, synthesis, and recommendations to validate assumptions around user value, trust, and control.

Rather than focusing on shipping outcomes, my role centered on using design to create the right inputs for a high-stakes decision before execution began.

The default at Salesloft was to wait for Figma-ready mockups before validating anything. High fidelity before feedback. In a high-uncertainty environment, that's a liability.

We made a different call.

Rapid Concept Translation

My PM and I built the prototype together on a Zoom call. No handoff, no waiting. Using generative AI tools, we went from blank canvas to testable workflow in hours. Not polished. Good enough to put a real workflow in front of users and get a real reaction. We were debating edge cases the same day, before assumptions had time to harden.

Focused Validation

I built a research plan around the assumptions that carried the most risk, not the ones easiest to test.

- Would users trust an AI-driven prospecting workflow?

- Where did they expect control versus automation?

- What would make it feel reliable rather than risky?

The PM scheduled five interviews that same week. No engineering time blocked. No design handoff required.

Joint Discovery & Synthesis

We ran interviews together so there was no translation layer between research and product decisions. I led synthesis using the MoSCoW framework (must have, should have, could have, won't have) to separate what users actually needed, including needs that were easy to overlook, from what would simply have been easy to build.

The concept: an AI Prospecting Agent that works a rep's unworked territory, handles initial outreach, and hands off the moment a prospect engages. Not a feature. A new category of workflow.

One rep put the problem plainly:

"I have 1,300 accounts. I'm working 100. That's not really good enough."

— Senior Commercial Account Manager

Concept confirmed in under one week

The territory gap was universal. Hundreds to thousands of accounts per rep, fewer than 100 actively worked. The correlation between accounts touched and revenue closed made the value case straightforward. Demand was not the question.

Changed how discovery gets done

Low fidelity, fast to test, hypothesis-driven. This became the reference point for early-stage AI discovery at Salesloft.

Changed the product decision

The real question was trust. Users didn't resist the concept. They resisted handing their professional reputation to a system they couldn't yet verify. One rep said it directly: "It's scary to give that, and then have a whole entire impression of this market that we're not good salespeople."

That finding changed leadership's call. Full autonomy became a co-pilot MVP. The research didn't just validate the concept. It reshaped it.

The output was not a shipped feature. It was a validated direction with enough evidence behind it that engineering could start with confidence.

From Design, we delivered:

A validated agentic concept, user-confirmed and ready to inform the PRD and roadmap.

A functional prototype used for stakeholder alignment and research sessions, with no engineering required.

A research synthesis translating user feedback into clear, prioritized requirements.

This allowed Design to operate upstream of execution, accelerating delivery while reducing rework and ambiguity.

The most powerful use of AI in product development isn't speed. It's clarity.

The harder lesson was about fidelity. There is a version of design rigor that looks like thoroughness but is actually fear. Waiting for perfect mockups before talking to users isn't process. It's protection. What this project proved is that a low-fidelity prototype that answers the right question is worth more than a polished one that answers the wrong one.

I carry that forward on every early-stage initiative now. Get it testable. Get it in front of people. Let the evidence do the work.